The MSPbots OpenAI Ticket Sentiment Analysis Bot analyzes the sentiment, or overall tone, of a ticket summary. This analysis is useful when your MSP receives many tickets in a day. The bot returns tickets with negative sentiment and sends alerts, either directly or as messages in chat channels. This makes service managers and technicians aware of negative sentiment in a ticket so they can prioritize that ticket and work on it immediately, ensuring prompt and efficient resolution.


Sentiment analysis is valuable in gauging customer satisfaction, improving service delivery, and maintaining a positive brand image. Using the OpenAI Ticket Sentiment Analysis Bot is beneficial in the following areas and more:

The MSPbots OpenAI Sentiment Analysis Bot generates the sentiment through the OpenAI integration using specific prompts and returns whether a ticket's summary falls into any of the following categories:

Ensure that you have the following before you start using this bot:

Once the OpenAI integration is completed and verified, open the bot with the following steps:

After accessing the bot, click the ellipsis icon and click Clone to automatically add the cloned copy of the bot to the My Bots tab. For more information on cloning a template bot, refer to How to Clone a Bot Template.

To see the bot design:

You can tweak the OpenAI Sentiment Analysis Bot until it returns the results your requirements call for. To do this, create filters on the bot blocks for specific Ticket Types, Boards, Statuses, and other fields.

Currently, the OpenAI Sentiment Analysis Bot is the only bot supported by the OpenAI bot block, so we advise against using the bot block for any other purpose at this time. We are continuously developing new features and assets that will be available for the OpenAI bot block in the near future. If you have questions or requests about using the OpenAI bot block, contact the MSPbots Product Team at product@mspbots.ai.

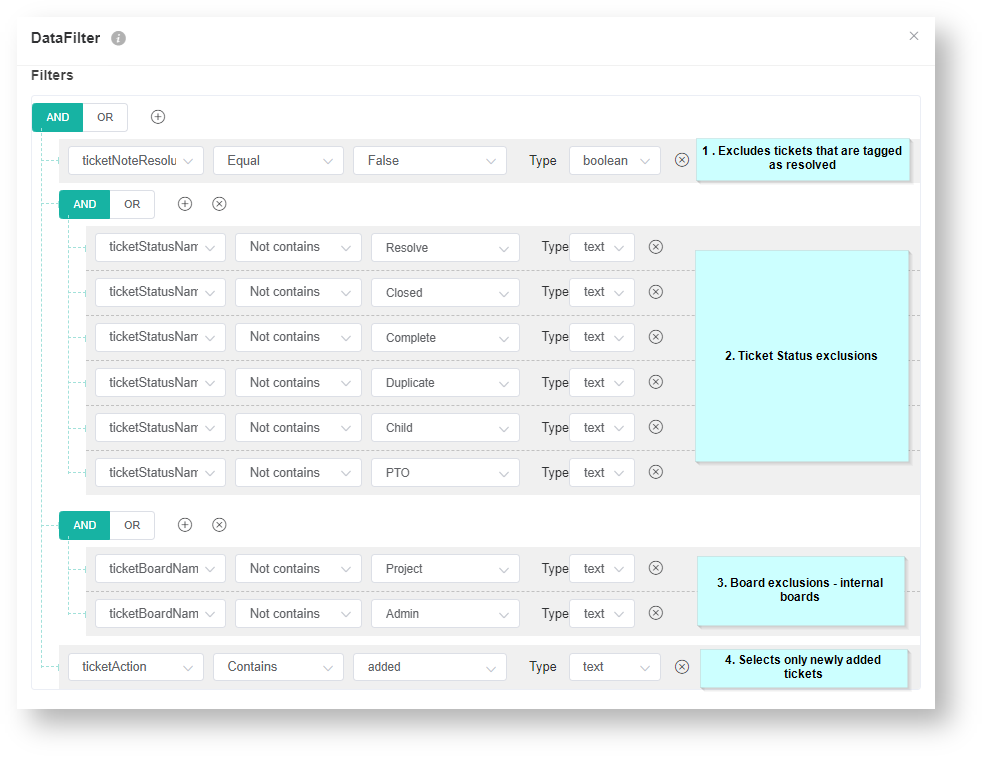

Below are the bot settings and filters for the OpenAI Sentiment Analysis Bot.

The bot uses the following filters: ticketNoteResolutionFlag, ticketStatusName, ticketBoardName, and ticketAction.

The DataFilter bot block window below shows how each filter works.

You can add and remove custom filters using the add and remove buttons. The other available filters are below.

ticketCwUid, ticketCompanyName, ticketOwner, minutesTicketInProgress, priorityName, and ticketTypeName
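Conceptually, each filter is a condition a ticket must meet before the bot analyzes it. The Python sketch below illustrates that idea on plain dictionaries keyed by the field names listed above. It is not how the DataFilter bot block is implemented: the AND-combination of filters, the board and status names, and the flag value are all assumptions chosen for illustration, and the helpers `passes_filters` and `select_tickets` are hypothetical.

```python
from typing import Any, Callable

# A ticket is modeled as a plain dict keyed by the field names the bot exposes.
Ticket = dict[str, Any]
Filters = dict[str, Callable[[Any], bool]]

# Hypothetical filter set: each field name maps to a test on that field's value.
# The board, status, and flag values are placeholders, not MSPbots defaults.
FILTERS: Filters = {
    "ticketBoardName": lambda board: board == "Service Desk",
    "ticketStatusName": lambda status: status not in {"Closed", "Completed"},
    "ticketNoteResolutionFlag": lambda flag: flag is False,
}


def passes_filters(ticket: Ticket, filters: Filters) -> bool:
    """Return True only if the ticket has every filtered field and each test passes (AND logic, assumed)."""
    return all(field in ticket and test(ticket[field]) for field, test in filters.items())


def select_tickets(tickets: list[Ticket], filters: Filters) -> list[Ticket]:
    """Keep the tickets that pass all filters, preserving their original order."""
    return [ticket for ticket in tickets if passes_filters(ticket, filters)]


# Made-up sample tickets for illustration only.
SAMPLE_TICKETS: list[Ticket] = [
    {"ticketCwUid": 1, "ticketBoardName": "Service Desk", "ticketStatusName": "New", "ticketNoteResolutionFlag": False},
    {"ticketCwUid": 2, "ticketBoardName": "Projects", "ticketStatusName": "New", "ticketNoteResolutionFlag": False},
    {"ticketCwUid": 3, "ticketBoardName": "Service Desk", "ticketStatusName": "Closed", "ticketNoteResolutionFlag": False},
]

if __name__ == "__main__":
    print([t["ticketCwUid"] for t in select_tickets(SAMPLE_TICKETS, FILTERS)])  # [1]
```

Only ticket 1 survives: ticket 2 is on a different board and ticket 3 is closed. Adding a filter such as ticketTypeName narrows the set further, which is the same effect as adding a custom filter in the DataFilter bot block.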

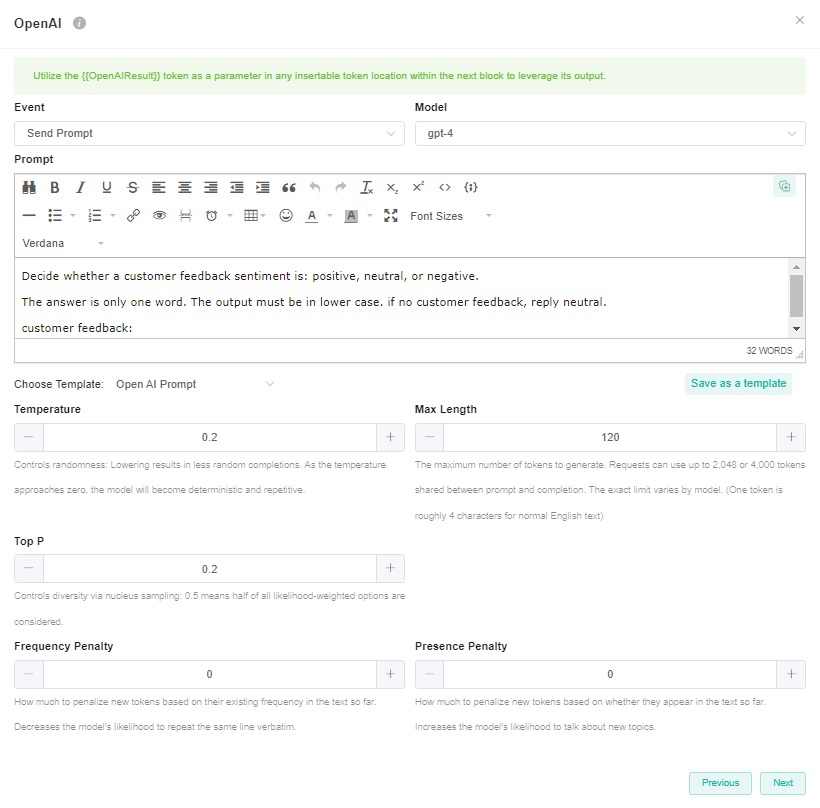

| Setting | Default | Description |
|---|---|---|
| Model | - | Selects the OpenAI model you want to use. The options are the models available in your OpenAI integration, including GPT-4. If you haven't connected the OpenAI integration to MSPbots yet, or if MSPbots cannot fetch the available GPT models from your OpenAI integration, the list is empty. To learn how to connect the OpenAI integration, refer to OpenAI Integration Setup. If you previously configured the OpenAI bot block's model as GPT-3.5-turbo, the model remains GPT-3.5-turbo. |
| Temperature | 0.2 | The Temperature setting is common to all ChatGPT functions and sets the sampling temperature as a number between 0 and 1. Use values close to 1 for creative applications and values close to 0 for well-defined answers. For example, to return a factual or straightforward answer such as a country's capital, use 0. For less straightforward tasks such as generating text or content, a higher temperature lets the model capture idiomatic expressions and nuances in the text. |
| Max Length | 120 | The maximum number of tokens the model can use to generate a result. Tokens are pieces of words that the model uses to process text; one token is roughly 4 characters of English text. |
| Top P | 0.2 | Top-p sampling, also known as nucleus sampling, is an alternative to temperature sampling. Instead of considering all possible tokens, the model considers only a subset, or nucleus, of the most likely tokens whose cumulative probability adds up to the Top P threshold. With Top P set to 0.2, the model only considers the tokens that make up the top 20% of the probability mass for the next token, so the candidate vocabulary adapts to the context. |
| Frequency Penalty | 0 | Tells the model to limit repeated tokens, like a reminder not to overuse certain words or phrases. This mainly applies to text generation rather than sentiment analysis, so it is set to 0. |
| Presence Penalty | 0 | Tells the model to include a wider variety of tokens in generated text. Like the frequency penalty, it applies mainly to text generation rather than sentiment analysis, so it is set to 0. |
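If you are familiar with the OpenAI API, these settings appear to correspond to the standard Chat Completions request parameters `model`, `temperature`, `max_tokens` (Max Length), `top_p`, `frequency_penalty`, and `presence_penalty`. The Python sketch below assumes that mapping and only builds a request body; it does not call the API. The `build_request` helper and the system prompt are hypothetical and are not the bot's actual prompt.

```python
import json

# Defaults copied from the settings table above. Mapping each setting to an OpenAI
# Chat Completions parameter is an assumption, not documented MSPbots internals.
DEFAULT_SETTINGS = {
    "temperature": 0.2,
    "max_tokens": 120,  # "Max Length"
    "top_p": 0.2,
    "frequency_penalty": 0,
    "presence_penalty": 0,
}


def build_request(summary: str, model: str, **overrides) -> dict:
    """Hypothetical helper: return a Chat Completions request body for one ticket summary."""
    unknown = set(overrides) - set(DEFAULT_SETTINGS)
    if unknown:
        raise ValueError(f"unsupported settings: {sorted(unknown)}")
    # Model has no default in the table, so the caller must always supply it.
    body = {"model": model, **DEFAULT_SETTINGS, **overrides}
    body["messages"] = [
        # Placeholder prompt; the bot's real prompt is not shown in this article.
        {"role": "system", "content": "Classify the sentiment of the following ticket summary."},
        {"role": "user", "content": summary},
    ]
    return body


if __name__ == "__main__":
    request = build_request("Printer is still broken after my third call!", model="gpt-4")
    print(json.dumps(request, indent=2))
```

The low Temperature and Top P defaults both push the model toward its most likely answer, which suits a classification task. OpenAI's API reference generally recommends adjusting either `temperature` or `top_p`, not both, so if you tune one, consider leaving the other at its default.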

Pricing for OpenAI GPT-3.5 Turbo is charged per 1,000 tokens and depends on the context size. Confirm which context size applies to you in your OpenAI billing.

| GPT-3.5 Turbo model | Input | Output |
|---|---|---|
| 4K context | $0.0015 per 1,000 tokens | $0.002 per 1,000 tokens |
| 16K context | $0.003 per 1,000 tokens | $0.004 per 1,000 tokens |

For more information, refer to the OpenAI ChatGPT pricing page.
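To estimate spend, multiply each token count by its per-1,000-token rate and add the input and output costs. The Python sketch below uses the GPT-3.5 Turbo rates from the pricing table and the rough 1 token ≈ 4 characters rule from the Max Length setting. The helper names and the 500-character prompt in the example are illustrative assumptions; actual token counts come from OpenAI's tokenizer, so treat the result as a rough estimate and check your OpenAI billing for real figures.

```python
import math

# Per-1,000-token rates (USD) for GPT-3.5 Turbo, copied from the pricing table.
RATES = {
    "4K": {"input": 0.0015, "output": 0.002},
    "16K": {"input": 0.003, "output": 0.004},
}


def estimate_tokens(text: str) -> int:
    """Illustrative helper: rough token count using the ~4 characters per token rule."""
    return math.ceil(len(text) / 4)


def estimate_cost(input_tokens: int, output_tokens: int, context: str = "4K") -> float:
    """Illustrative helper: estimated USD cost of one request for the given context size."""
    rate = RATES[context]
    return input_tokens / 1000 * rate["input"] + output_tokens / 1000 * rate["output"]


if __name__ == "__main__":
    prompt = "x" * 500  # stand-in for a 500-character prompt plus ticket summary
    # 120 output tokens is the Max Length default, i.e. the worst case per ticket.
    per_ticket = estimate_cost(estimate_tokens(prompt), 120)
    print(f"~${per_ticket:.6f} per ticket, ~${per_ticket * 1000:.2f} per 1,000 tickets")
```

Using the Max Length default for the output side gives an upper bound on output cost, because the model may finish its answer in fewer tokens.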